Lately I’ve been attending some talks (and having some conversations) about log parsing from an SEO perspective (from @David Sottimano at the Untagged Conference, and with Lino Uruñuela over dinner), so I’ve decided to publish a WordPress plugin that I started working on some years ago. For work reasons I had left it in my “I’ll do it” drawer, and it never came back to my mind.

The first thing I need to point out is that this is a BETA PLUGIN, so please be careful about using it on a high-traffic or production site. I’ve been running it on this site for 4 days without any problems, but that doesn’t mean it’s free of bugs. For now, let’s consider this plugin a proof of concept.

The main task of the plugin is to register search bot visits to our WordPress site in Google Analytics, using the Measurement Protocol.

The plugin’s workflow is simple: it checks whether the visiting User Agent matches any known crawler and, if it does, sends a pageview to a Google Analytics property. Please keep in mind that it’s recommended to use a new property, since we’re going to use a lot of custom dimensions to track some extra info besides the visited pages =)
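To make that flow concrete, here is a minimal sketch in Python (the plugin itself is PHP, so this is only an illustration, not its actual code). The crawler patterns, the function names and the use of `cd1` for the bot name are assumptions of mine; the parameter names (`v`, `tid`, `cid`, `t`, `dh`, `dp`, `ua`, `cdN`) are the ones the Universal Analytics Measurement Protocol defines.

```python
import re
from urllib.parse import urlencode

# Hypothetical, tiny pattern list for illustration only; the plugin relies on
# the much more complete uap-core definitions instead.
CRAWLER_PATTERNS = {
    "Googlebot": re.compile(r"Googlebot", re.I),
    "Bingbot": re.compile(r"bingbot", re.I),
    "YandexBot": re.compile(r"YandexBot", re.I),
}

# Measurement Protocol (Universal Analytics) collection endpoint.
GA_ENDPOINT = "https://www.google-analytics.com/collect"


def detect_crawler(user_agent):
    """Return the crawler name if the User Agent matches a known bot, else None."""
    for name, pattern in CRAWLER_PATTERNS.items():
        if pattern.search(user_agent):
            return name
    return None


def build_pageview(property_id, client_id, host, path, user_agent):
    """Build the URL-encoded pageview body for a bot visit, or None for non-bots."""
    bot = detect_crawler(user_agent)
    if bot is None:
        return None  # regular visitor: nothing is sent
    params = {
        "v": "1",            # protocol version
        "tid": property_id,  # property ID, e.g. UA-XXXXX-Y
        "cid": client_id,    # client ID
        "t": "pageview",     # hit type
        "dh": host,          # document hostname
        "dp": path,          # document path
        "ua": user_agent,    # user agent override
        "cd1": bot,          # assumed custom dimension slot for the bot name
    }
    return urlencode(params)
```

The returned string would then be sent as the body of a POST request to `GA_ENDPOINT`; the actual network call is left out here on purpose.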

I used to have my own User Agent parser, but I ended up using another well-established (and surely more reliable) library. When something works there’s no need to reinvent the wheel :). So this plugin uses the PHP library for the uap-core project.

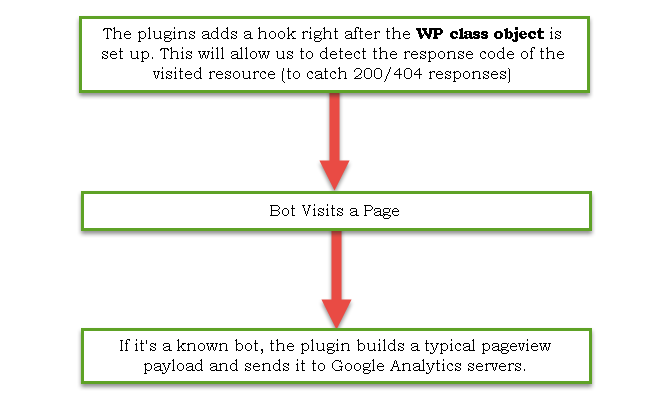

Let’s see a simple flow chart about what the plugin does:

I’m sure this was easy enough to understand. But we don’t only want to check which pages were visited by a search bot; we’re going further, and we’ll be tracking the following:

And you’ll surely find answers to a lot more questions: since we’re using Google Analytics to track those visits, we’ll be able to cross any of the dimensions as needed.

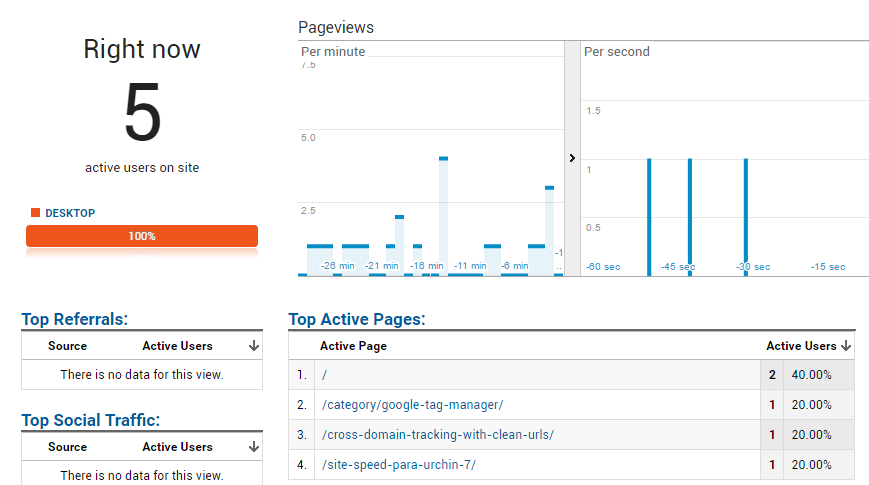

Another cool thing about tracking bot crawls with the Measurement Protocol is that we’ll be able to watch how our site is being crawled in the real-time reports! 🙂

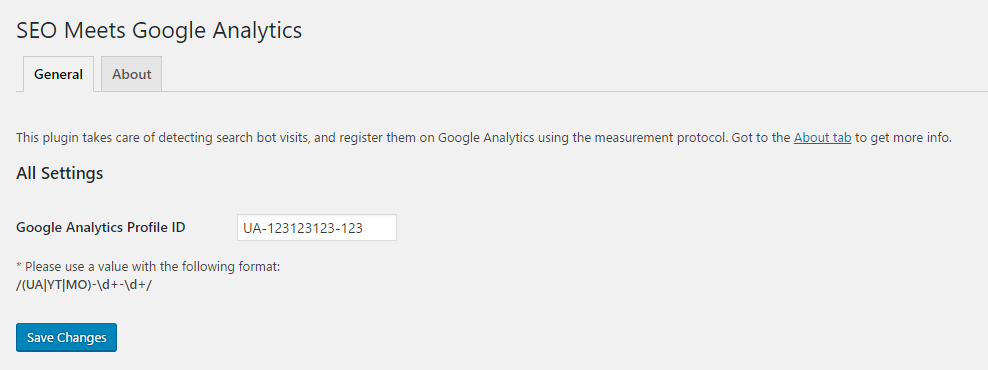

Setup

You’ll just need to download the plugin zip file from the following URL, drop it in your WordPress plugins folder, and configure the Google Analytics Property ID where you want to send your data.

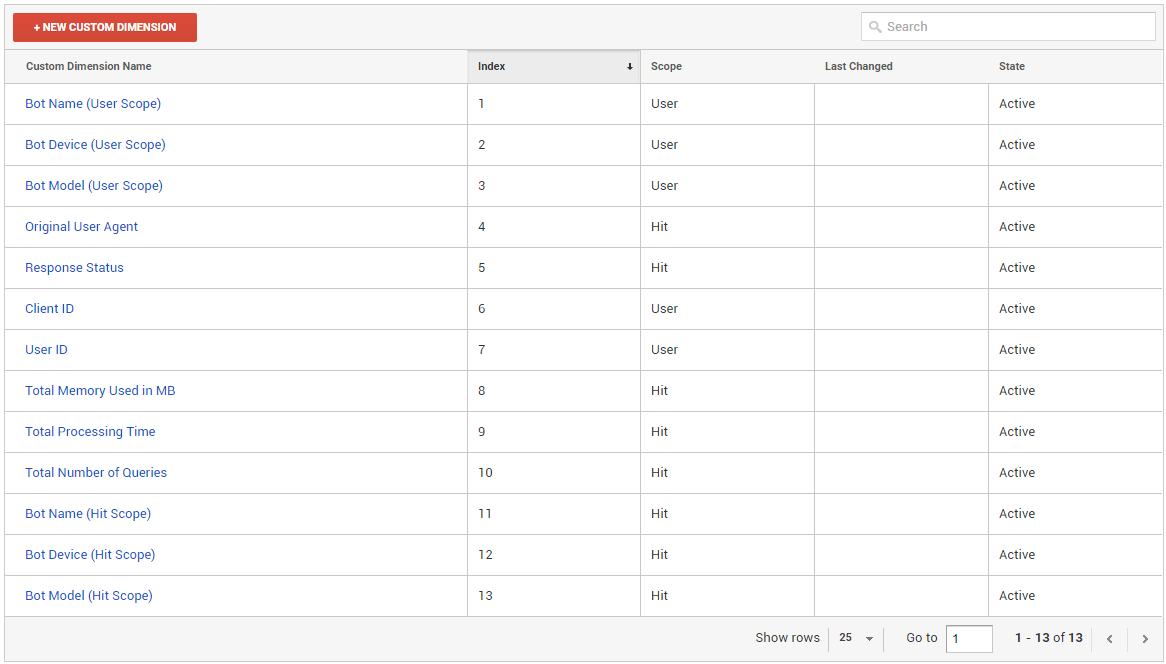

Used Custom Dimensions

You may be wondering why we have the same bot-related dimensions duplicated with a different scope. This is because, as I explained before, we’re using the bot IP address to build a clientID and a userID, and Google may use the same IP for different bots (like Desktop or Feature Phone). This way we have the hit-level info too, in case the user-scoped data gets overridden 🙂
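As a rough illustration of the idea (in Python, not the plugin’s PHP), a stable clientID can be derived from the bot IP by hashing it into a UUID. How the plugin really builds its IDs isn’t shown in this post, so the `uuid5` approach, the function names and the `cd1` to `cd4` slots below are all assumptions.

```python
import uuid


def client_id_from_ip(ip_address):
    """Derive a deterministic UUID from an IP, so the same IP always maps to the same ID."""
    # uuid5 is a name-based (SHA-1) UUID; the "bot-ip:" prefix is an arbitrary assumption.
    return str(uuid.uuid5(uuid.NAMESPACE_URL, "bot-ip:" + ip_address))


def bot_dimensions(bot_name, device_type):
    """Send the same bot info twice: once in user-scoped slots, once in hit-scoped slots."""
    # Assumed slots: cd1/cd2 configured as user scope, cd3/cd4 as hit scope.
    # The scope itself is set in the property's custom dimension settings, not in the hit.
    return {
        "cd1": bot_name,
        "cd2": device_type,
        "cd3": bot_name,
        "cd4": device_type,
    }
```

If two different bots share an IP, they share the derived clientID, so the user-scoped values can be overwritten by whichever bot hit last; the hit-scoped copies keep the right value for every single pageview.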

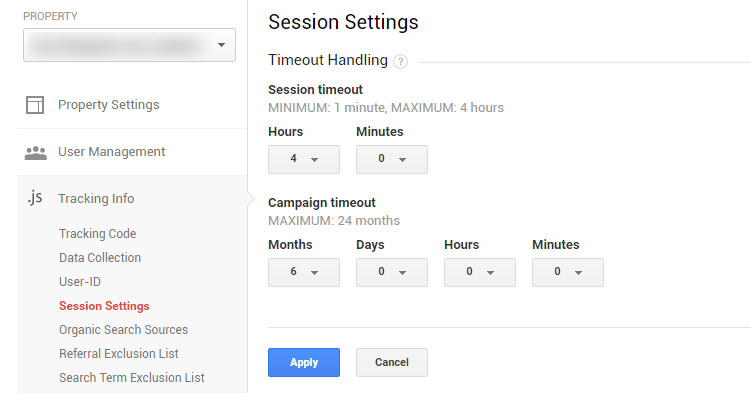

Another thing we may want to do is set the session timeout to 4 hours in our profile configuration. Bots don’t crawl the same way a user navigates a site, and we may be getting only 2 page hits per hour, so the default 30-minute timeout makes no sense at all.

Let’s now see how the reports will look in Google Analytics 🙂

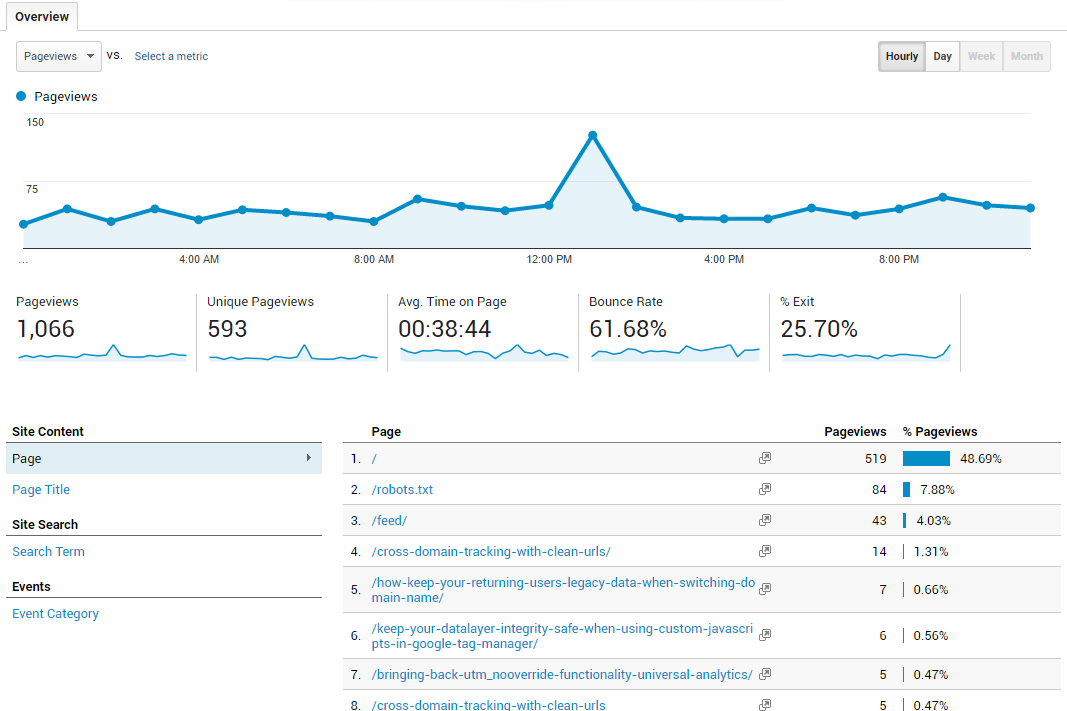

Content consumed by bots, with an hourly breakdown

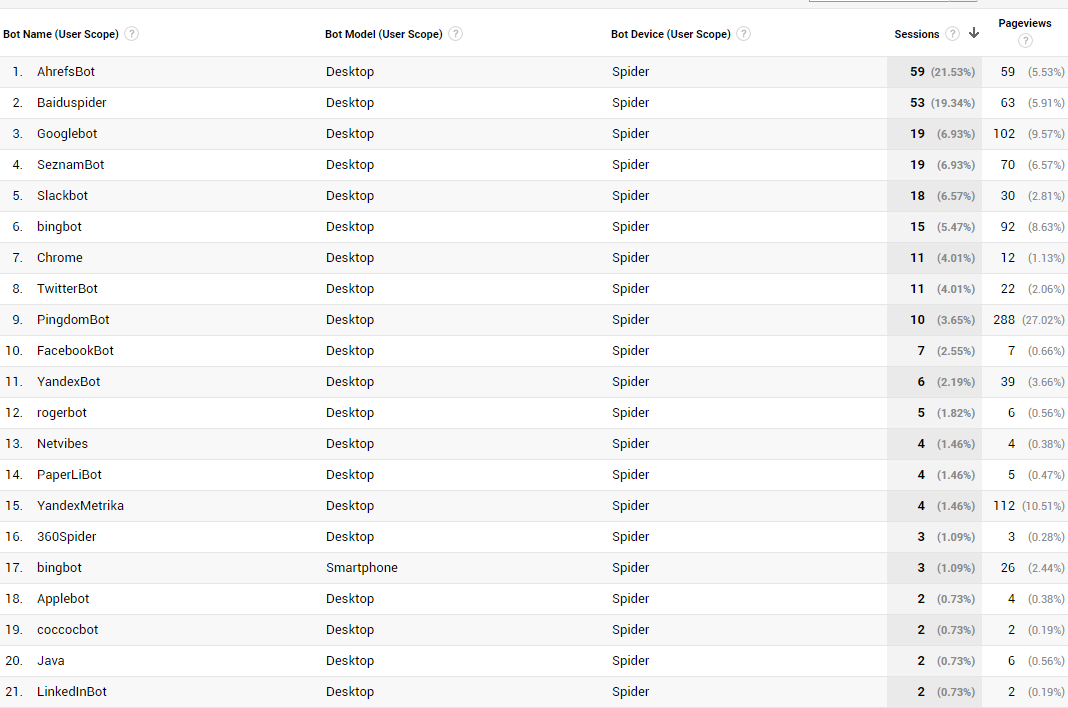

Total sessions and pageviews by search bot

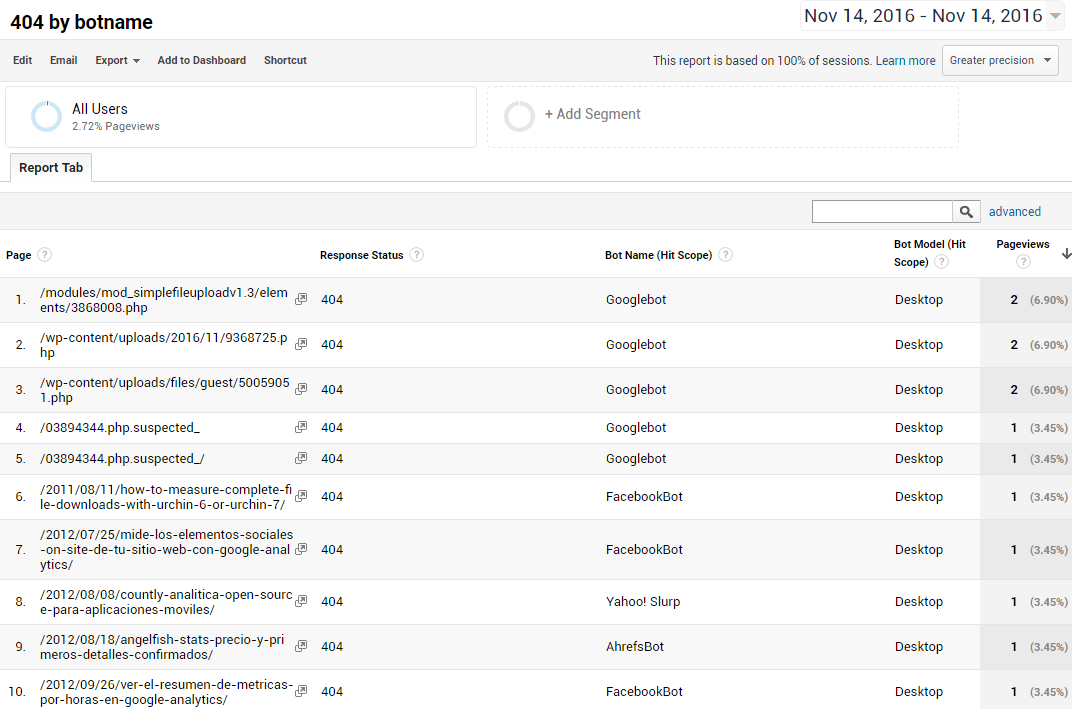

Pages that returned a 404 and which bot was crawling them

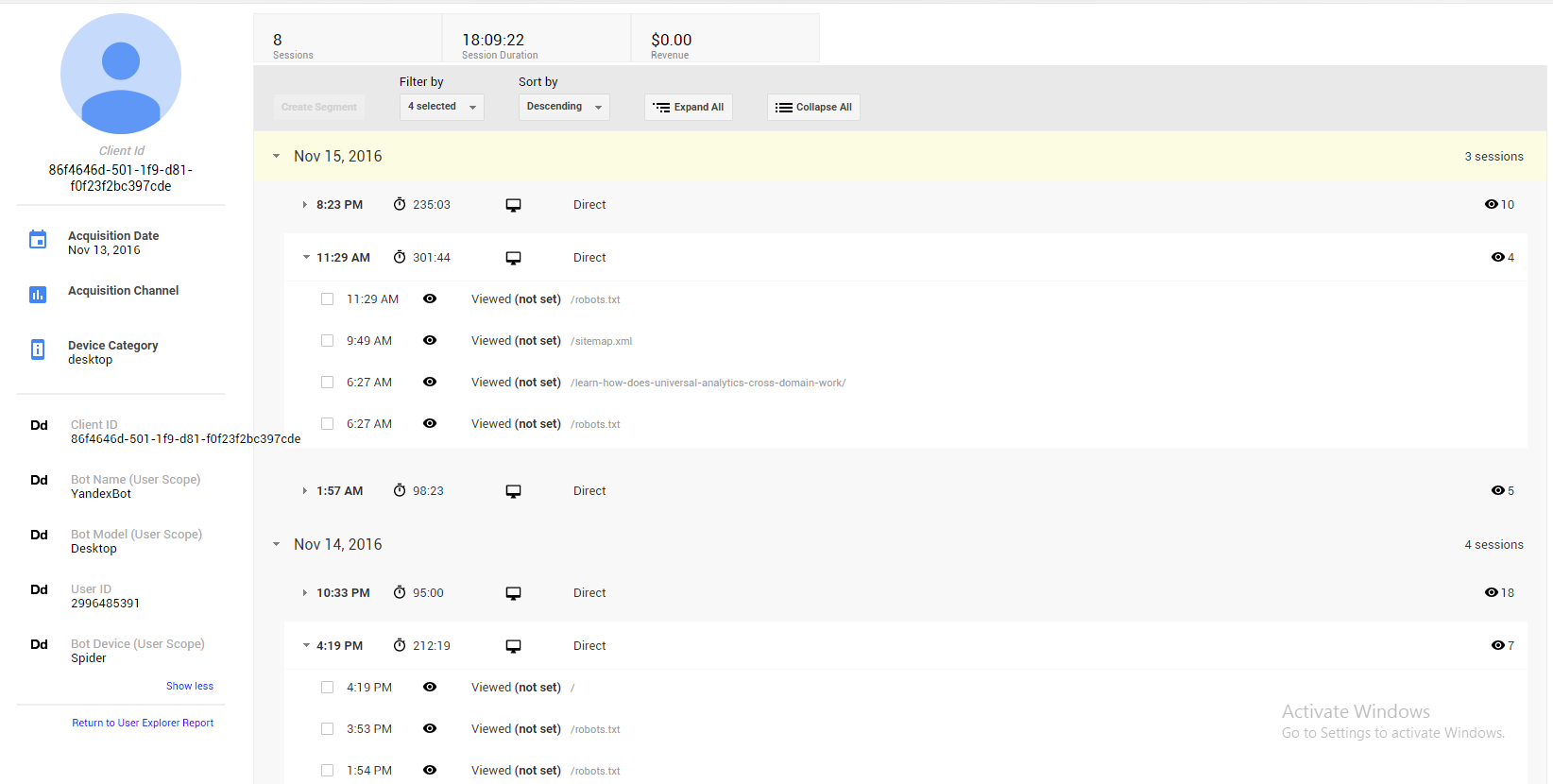

Which pages a certain bot crawled (User Explorer Report)

You can get the plugin from the following GitHub repository:

https://github.com/thyngster/wp-seo-ga

If you are unable to run the plugin, please drop me a comment on this post or open an issue on GitHub and I’ll try to take a look at it.

Any suggestions/improvements will be very welcome too 🙂